To do so, we build services that allow us to manage campaigns, optimize and distribute content, and measure the performance of the campaigns at scale. Here we learned how to use the HiveOperator in the airflow DAG.In the Performance Marketing department, we run paid advertisement campaigns for Zalando. The output of the above dag file in the hive command line is as below: Follow the steps for the check insert data task.

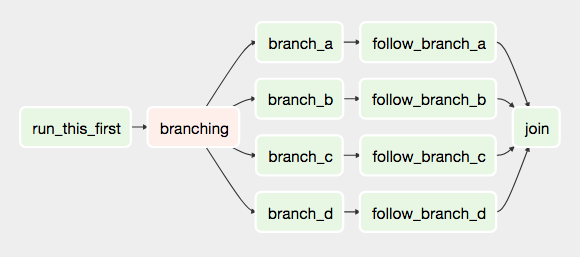

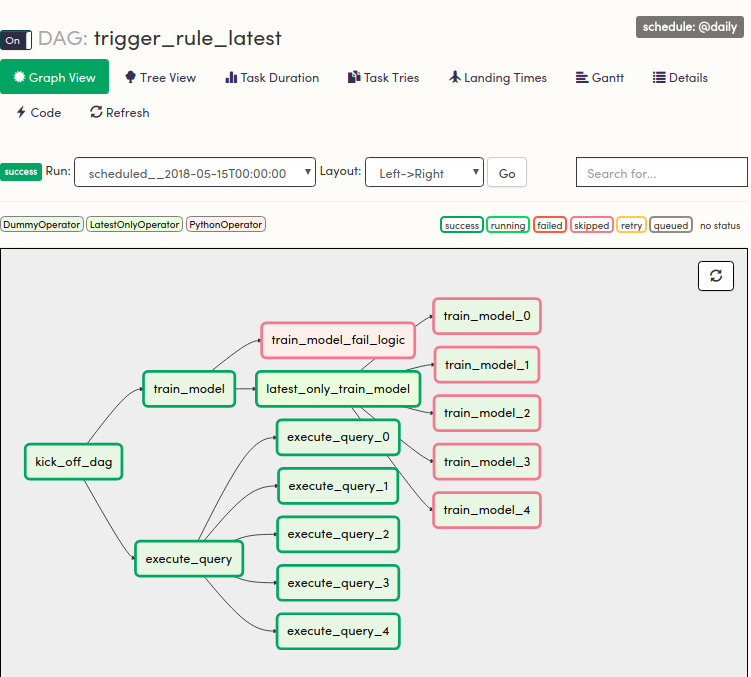

The above log file shows that creating table tasks is a success. To check the log file how the query ran, click on the create table in graph view, then you will get the below window.Ĭlick on the log tab to check the log file. In the below, as seen that we unpause the hiveoperator_demo dag file.Ĭlick on the "hiveoperator_demo" name to check the dag log file and then select the graph view as seen below, we have two tasks: create a table and insert data tasks. Note: Before executing the dag file, please check the Hadoop daemons are running are not otherwise, dag will throw an error called connection refused. Give the conn Id what you want and the select hive for the connType and give the host and then specify the schema name pass credentials of hive default port is 10000 if you have the password for the hive pass the password. Go to the admin tab select the connections then, you will get a new window to create and pass the details of the hive connection as below.Ĭlick on the plus button beside the action tab to create a connection in Airflow to connect the hive. Step 6: Creating the connection.Ĭreating the connection airflow to connect the hive as shown in below And it is your job to write the configuration and organize the tasks in specific orders to create a complete data pipeline. Instead, tasks are the element of Airflow that actually "do the work" we want to be performed. DAGs do not perform any actual computation. A DAG is just a Python file used to organize tasks and set their execution context. The above code lines explain that 1st task_2 will run then after the task_1 executes. Here are a few ways you can define dependencies between them: Here we are Setting up the dependencies or the order in which the tasks should be executed. 
Insert into dezyre.employee values(1, 'vamshi','bigdata'),(2, 'divya','bigdata'),(3, 'binny','projectmanager'), Here in the code create_table, insert_data codes are tasks created by instantiating.Ĭreate table if not exists dezyre.employee (id int, name string, dept string) The next step is setting up all the tasks, which we want in the workflow. Note: Use schedule_interval=None and not schedule_interval='None' when you don't want to schedule your DAG. We can schedule by giving preset or cron format as you see in the table.ĭon't schedule use exclusively "externally triggered" once and only once an hour at the beginning of the hourĠ 0 * * once a week at midnight on Sunday morningĠ 0 * * once a month at midnight on the first day of the monthĠ 0 1 * once a year at midnight of January 1 # schedule_interval='0 0 * * case of hive operator in airflow', Give the DAG name, configure the schedule, and set the DAG settings # If a task fails, retry it once after waiting Import Python dependencies needed for the workflowįrom _operator import HiveOperatorĭefine default and DAG-specific arguments Recipe Objective: How to use the HiveOperator in the airflow DAG?.Here in this scenario, we are going to schedule dag file, create a table, and insert data into it in hive using HiveOperatorĬreate a dag file in /airflow/dags folder using the below commandĪfter making the dag file in the dags folder, follow the below steps to write a dag file Install packages if you are using latest version airflow pip3 install apache-airflow-providers-apache-hive.Install Ubuntu in the virtual machine click here.Essentially this means workflows are represented by a set of tasks and dependencies between them.īuild a Real-Time Dashboard with Spark, Grafana and Influxdb System requirements : To ensure that each task of your data pipeline will get executed in the correct order and each task gets the required resources, Apache Airflow is the best open-source tool to schedule and monitor Airflow represents 
workflows as Directed Acyclic Graphs or DAGs. In big data scenarios, we schedule and run your complex data pipelines. Recipe Objective: How to use the HiveOperator in the airflow DAG?
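Steps 1 to 5 above can be put together roughly as follows. This is a minimal sketch rather than the recipe's exact file: it assumes Airflow 2.x with the `apache-airflow-providers-apache-hive` package installed, and the owner, retry delay, start date, `@once` schedule, and the conn Id `hive_local` are illustrative values that are not given in the text. The helper names `build_dag` and `_hive_provider_installed` are hypothetical.

```python
"""Sketch of a DAG file for the /airflow/dags folder (Airflow 2.x assumed)."""
import importlib.util
from datetime import datetime, timedelta

# HiveQL from the recipe; the original insert statement lists further rows.
CREATE_TABLE_HQL = (
    "CREATE TABLE IF NOT EXISTS dezyre.employee "
    "(id INT, name STRING, dept STRING)"
)
INSERT_DATA_HQL = (
    "INSERT INTO dezyre.employee VALUES "
    "(1, 'vamshi', 'bigdata'), (2, 'divya', 'bigdata'), "
    "(3, 'binny', 'projectmanager')"
)

# Step 2: default arguments applied to every task.
DEFAULT_ARGS = {
    "owner": "airflow",                   # assumed owner name
    "retries": 1,                         # if a task fails, retry it once
    "retry_delay": timedelta(minutes=5),  # assumed waiting time before the retry
}

HIVE_CONN_ID = "hive_local"  # hypothetical; use the conn Id created in Step 6


def build_dag():
    """Build the DAG. Airflow is imported here so that the constants above
    can still be inspected on a machine without Airflow installed."""
    # Step 1: imports (provider package path used by Airflow 2.x).
    from airflow import DAG
    from airflow.providers.apache.hive.operators.hive import HiveOperator

    # Step 3: DAG name, schedule, and settings.
    dag = DAG(
        dag_id="hiveoperator_demo",
        default_args=DEFAULT_ARGS,
        schedule_interval="@once",        # assumed; any preset or cron string works
        start_date=datetime(2022, 1, 1),  # assumed start date
        catchup=False,
        description="use case of hive operator in airflow",
    )

    # Step 4: tasks created by instantiating the HiveOperator.
    create_table = HiveOperator(
        task_id="create_table",
        hql=CREATE_TABLE_HQL,
        hive_cli_conn_id=HIVE_CONN_ID,
        dag=dag,
    )
    insert_data = HiveOperator(
        task_id="insert_data",
        hql=INSERT_DATA_HQL,
        hive_cli_conn_id=HIVE_CONN_ID,
        dag=dag,
    )

    # Step 5: create_table runs first, insert_data runs after it.
    create_table >> insert_data
    return dag


def _hive_provider_installed() -> bool:
    """Return True only if Airflow and its Hive provider can be imported."""
    try:
        return importlib.util.find_spec("airflow.providers.apache.hive") is not None
    except ImportError:
        return False


# Airflow picks up DAG objects defined at module level.
if _hive_provider_installed():
    dag = build_dag()
```

Keeping the HiveQL strings and default arguments as module-level constants is a design choice of this sketch; it makes them easy to review or test separately from the Airflow objects.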

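The "connection refused" note above can be checked before triggering the DAG. The sketch below only tests whether a TCP port accepts connections; it assumes Hive listens on `localhost` at the default port 10000 mentioned in Step 6, and it does not verify the Hadoop daemons themselves. The function name `hive_port_open` is hypothetical.

```python
import socket


def hive_port_open(host: str = "localhost", port: int = 10000,
                   timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection raises OSError (e.g. ConnectionRefusedError)
        # when nothing is listening or the attempt times out.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    if hive_port_open():
        print("Hive port is reachable.")
    else:
        print("Connection failed; check that the Hadoop and Hive services are running.")
```

If the check fails, start the services first and only then unpause the DAG.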